A few months ago I wrote about how the audience for our codebases is changing. The short version: the primary consumer of your code is no longer you or your teammates. It’s an AI agent. And if we keep optimizing our workflows for human readers while an agent does most of the heavy lifting, we’re making candles in a world that needs light bulbs.

I got a lot of good feedback on that piece. Some people agreed. Some people thought I was overthinking it. A few asked the obvious follow-up question.

“Okay, so what does a light bulb workflow actually look like?”

Fair question. Here’s what I did about it.

The Problem I Kept Running Into

I work on multiple codebases at BrandCast. I am the engineering team. Claude Code and Gemini CLI are my pair programmers, and they are fast. They are tireless. They will write 400 lines of code in the time it takes me to refill my coffee. I also run a lot of quick demo and customer consultation work for my day job. Lots of context switching. Lots of different constraints and requirements. Lots of starting from scratch.

Gemini and Claude have the memory of a goldfish. My memory usually has the consistency of loose tapioca pudding.

Every new session starts from scratch. The agent doesn’t remember that we decided on a specific naming convention yesterday. It doesn’t know we already tried approach X and it broke the build. It hasn’t read the architecture docs sitting ten directories deep in the repo. Meanwhile, I’m coming in hot from a customer meeting with 30 minutes to check an idea I’d been mulling over for BrandCast. I don’t have time to re-read the architecture docs and re-explain the constraints. I just want to start hacking. And when the agent finishes a task, there’s no record of what it changed or why beyond a commit message that says “update components.”

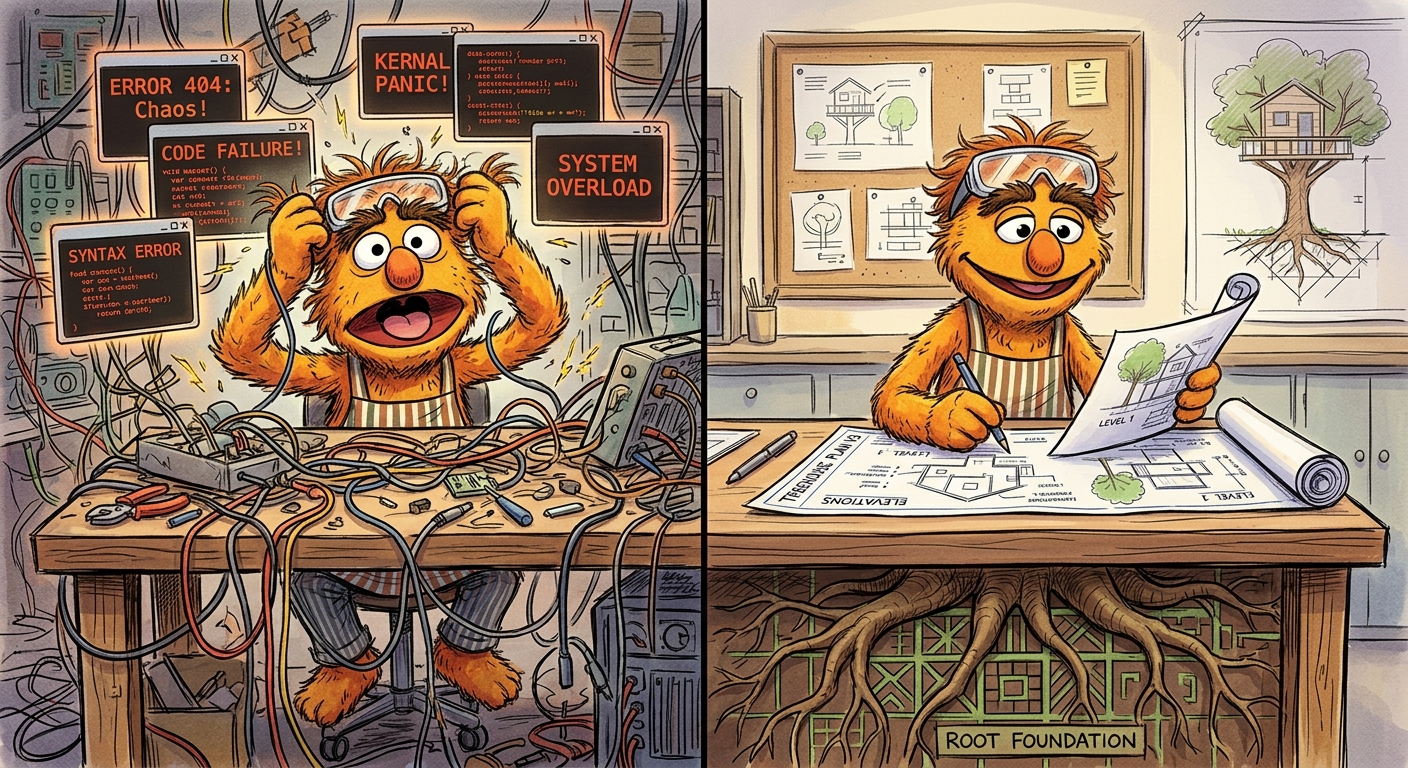

I found myself doing the same work over and over. Re-explaining context. Re-establishing guardrails. Catching the same categories of mistakes. The agents were productive. The workflow around them was not. My frustration would grow. Suddenly I’d be typing in all caps and cursing at Gemini and Claude.

And it wasn’t the agents’ fault. I was asking them to work inside a process designed for humans. They needed something different.

The Candle Factory

There’s a quote I like to butcher with people. It’s commonly attributed to Oren Harari:

“The electric light did not come from the continuous improvement of candles.”

Most AI coding tools today are being used like better candles. Autocomplete in your IDE. Inline suggestions. Chat sidebars. They bolt AI into the workflows and tooling we’ve built over the last 25 years, and for a lot of tasks, that works fine. There is nothing wrong with a good candle.

But if you’re building production software where an AI agent is doing the majority of the implementation, those workflows start to feel slightly out of place. Andrej Karpathy called the freeform approach “vibe coding,” and honestly, for prototypes and weekend projects, it’s great. Give in to the vibes. Let the agent run.

Production code is different. Recent analysis from CodeRabbit found that AI-generated code introduces 1.7x more issues than human-written code, with logic errors running 1.75x higher. That tracks with my experience. The agent is fast and capable, but without guardrails it will confidently produce code that passes a glance test and fails in production.

The 2025 Stack Overflow Developer Survey tells an interesting story here. 84% of developers are using or planning to use AI tools. But positive sentiment actually dropped from the previous year. People are adopting these tools and then running into the same friction I was hitting. The tools work. The workflows around them don’t.

So I Built Root

Root is a development workflow framework. It works as both a Claude Code plugin and a Gemini CLI extension. Same commands, same agents, same hooks, same templates. I use both tools daily and I didn’t want to pick sides.

Here’s what it does, explained through the problems it solves.

Not every change needs a PRD. But some really, really do.

Root classifies work into two tiers automatically. Tier 1 is features and refactors. Those get the full treatment: a PRD, an implementation plan with a dependency graph, parallel agent execution across specialized teams, and automated validation before you can call it done. Tier 2 is bugs and small changes. Those get lightweight inline planning and a single commit. The insight is that structure should scale with complexity. A typo fix and a database migration should not go through the same process.
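
To make the two-tier idea concrete, here is a minimal sketch of routing work by type. It is not Root's actual classifier, which isn't described in this post; the `WorkItem` type, the label names, and the `classify` function are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    FULL = 1         # features and refactors: PRD, plan, validation
    LIGHTWEIGHT = 2  # bugs and small changes: inline plan, single commit


@dataclass
class WorkItem:
    title: str
    labels: frozenset[str] = frozenset()


# Hypothetical labels; the real signals Root uses are not shown in this post.
TIER_1_LABELS = {"feature", "refactor"}


def classify(item: WorkItem) -> Tier:
    """Route features and refactors to Tier 1, everything else to Tier 2."""
    if item.labels & TIER_1_LABELS:
        return Tier.FULL
    return Tier.LIGHTWEIGHT
```

Under these assumptions, an item labeled `feature` lands in `Tier.FULL`, while a typo fix labeled `bug` gets `Tier.LIGHTWEIGHT`. The point is only that the process is chosen from the shape of the work, not applied uniformly.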

The agent remembers what happened last time.

Five hook scripts track your session state. What files were edited. What docs were read. What the GitHub issue context was. When a session ends, you get a receipt. When a new session starts, that context is available. The goldfish gets a memory.
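
As a rough illustration of the receipt idea, a session's state can be serialized when it ends and reloaded when the next one begins. This is a sketch under stated assumptions, not Root's hook scripts: the `SessionState` class, its field names, and the JSON layout are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class SessionState:
    # Hypothetical fields mirroring what the post says gets tracked.
    issue: Optional[str] = None
    files_edited: list[str] = field(default_factory=list)
    docs_read: list[str] = field(default_factory=list)

    def record_edit(self, path: str) -> None:
        """Remember an edited file once, in first-seen order."""
        if path not in self.files_edited:
            self.files_edited.append(path)

    def record_doc(self, path: str) -> None:
        """Remember a doc the agent read once, in first-seen order."""
        if path not in self.docs_read:
            self.docs_read.append(path)

    def receipt(self) -> str:
        """Serialize the session so a later session can load it."""
        return json.dumps(asdict(self), indent=2)


def restore(receipt: str) -> SessionState:
    """Rebuild session state from a previously written receipt."""
    return SessionState(**json.loads(receipt))
```

A hook that fires on file edits would call `record_edit`, one that fires at session end would write `receipt()` to disk, and one at session start would call `restore` on the last receipt.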

The agent actually reads the docs.

Root runs a local RAG database using an MCP server. It indexes your project documentation with a local embedding model. No external API calls. When Root loads context for a task, it pulls in the relevant docs automatically. The agent doesn’t need you to paste in the architecture overview. It already found it.
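
The retrieval step can be sketched in a few lines. To keep the example dependency-free, a bag-of-words count vector stands in for the local embedding model, so this is a toy version of the technique rather than what Root ships. The function names and the file paths in the usage note are assumptions.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Stand-in for a local embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(docs: dict[str, str]) -> dict[str, Counter]:
    """Embed every document once, keyed by its path."""
    return {path: embed(body) for path, body in docs.items()}


def search(index: dict[str, Counter], query: str, top_k: int = 3) -> list[str]:
    """Return the paths of the top_k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda path: cosine(q, index[path]), reverse=True)
    return ranked[:top_k]
```

Given an index built from a hypothetical `docs/auth.md` and `docs/deploy.md`, a query about the admin panel auth flow ranks the auth doc first. A real embedding model would match on meaning rather than shared words, but the index-then-rank shape is the same.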

One of the things I’m quickly discovering with truly agentic AI development is that the quality of the output is directly proportional to the quality of the input. The better the context, the better the code. Docs are king.

Different jobs need different agents.

Root ships eight agent templates. Four team roles: an architect who plans but can’t edit, an implementer with full access, a reviewer who checks against the plan, and a tester. Four specialist roles you can customize per project: backend, frontend, database, and devops. The right agent gets the right constraints for the right job.
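
One way to picture role constraints is as data attached to each template. The sketch below is illustrative only: the `AgentTemplate` type and `authorize_edit` helper are hypothetical, and the edit permissions for the reviewer and tester are guesses, since the post only states them for the architect and implementer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentTemplate:
    name: str
    purpose: str
    can_edit: bool


TEAM_ROLES = {
    "architect": AgentTemplate("architect", "plans the work", can_edit=False),
    "implementer": AgentTemplate("implementer", "writes the code", can_edit=True),
    # Assumption: the post does not say whether these two roles can edit.
    "reviewer": AgentTemplate("reviewer", "checks changes against the plan", can_edit=False),
    "tester": AgentTemplate("tester", "writes and runs tests", can_edit=True),
}

# Specialist roles named in the post; customized per project.
SPECIALISTS = ("backend", "frontend", "database", "devops")


def authorize_edit(role: str) -> None:
    """Raise PermissionError if the role is not allowed to modify files."""
    if not TEAM_ROLES[role].can_edit:
        raise PermissionError(f"{role} is read-only")
```

With this shape, `authorize_edit("implementer")` passes silently and `authorize_edit("architect")` raises, which is the "plans but can't edit" constraint expressed as a check rather than a prompt instruction.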

It works with whatever you’re using.

Claude Code and Gemini CLI have different hook systems, different event models, different configuration formats. Root abstracts all of that. Write your workflow once, run it in either harness. If a better tool comes along next year, Root can adapt without throwing away your entire workflow setup.

Maybe Antigravity or Cursor will work one day. I’m an old Linux nerd. I like my terminal.

The Part Where I’m Honest About What’s Hard

Root is at version 1.6.7. I wouldn’t call it “GA,” but it’s also not a fresh prototype. It’s opinionated. It works well for how I work, which is a solo developer running multiple codebases with AI agents doing most of the implementation.

If you have a team of ten and an established CI/CD pipeline and a working code review culture, Root might be more process than you need. Or it might not be the process that you like. Or it might fill a gap you didn’t know you had. I genuinely don’t know yet. That’s part of why it’s open source.

The RAG system works well for projects with existing documentation. If your project has no docs, the RAG layer doesn’t have much to offer. (Though Root’s documentation health tools can help you bootstrap that.)

I spent most of the past weekend finding and filling documentation gaps in my BrandCast monorepo using Root. I won’t say it was fun. But now, when I tell Gemini or Claude that we’re going to work on some GitHub issue, I’m far more confident it’ll find the context faster and with better precision.

Lastly, the dual-harness approach means I’m maintaining compatibility across two different AI tool ecosystems that are both evolving fast. Things break sometimes. I fix them. That’s the deal.

Give It a Look

Root is MIT licensed and lives on GitHub. It’s part of the BrandCast agent family alongside Chip (AI support), Twig (AI design), and Bark (Ops/SRE). But it’s also installable as a standalone Gemini CLI Extension or Claude Code plugin. To get started, you install the extension or plugin using your normal Gemini or Claude Code workflow.

Then you type:

/root:init

This creates a local config file and a couple of artifacts, and walks you through what it should ingest into the documentation RAG. Once that’s done, I’ve discovered you need to restart the harness for the configs to take effect cleanly. And you’re off and running. You can start a full planning workflow:

/root let's work on this thing...

Or you can query the docs directly:

/root:docs search what is the auth flow for the admin panel?

Note: Claude handles this search a little more cleanly right now than Gemini. I’m working on the prompt.

If you’re building production software with AI agents and you’re tired of re-explaining context every session, losing track of what changed, or watching your agents make the same mistakes on repeat, Root might help.

Or at the very least, it might give you ideas for your own light bulb.