There is a quote bouncing around LinkedIn this week from Eric Schmidt, the former Google CEO, saying he has seen programming start and end in his lifetime. He has been doing this for fifty-five years, he says, and now “every aspect of the programming that I did, every aspect of the design is now done by computers.” The headline writers did what headline writers do. The end of coding. The death of the programmer. 2026 is the year the craft disappears.

Eric Schmidt is a very smart person. I have never had the chance to work with him and I am not about to pretend I know more than he does about the industry. But the framing is sloppy, and I think it is worth pushing back on, because “end” and “evolve” are two very different words.

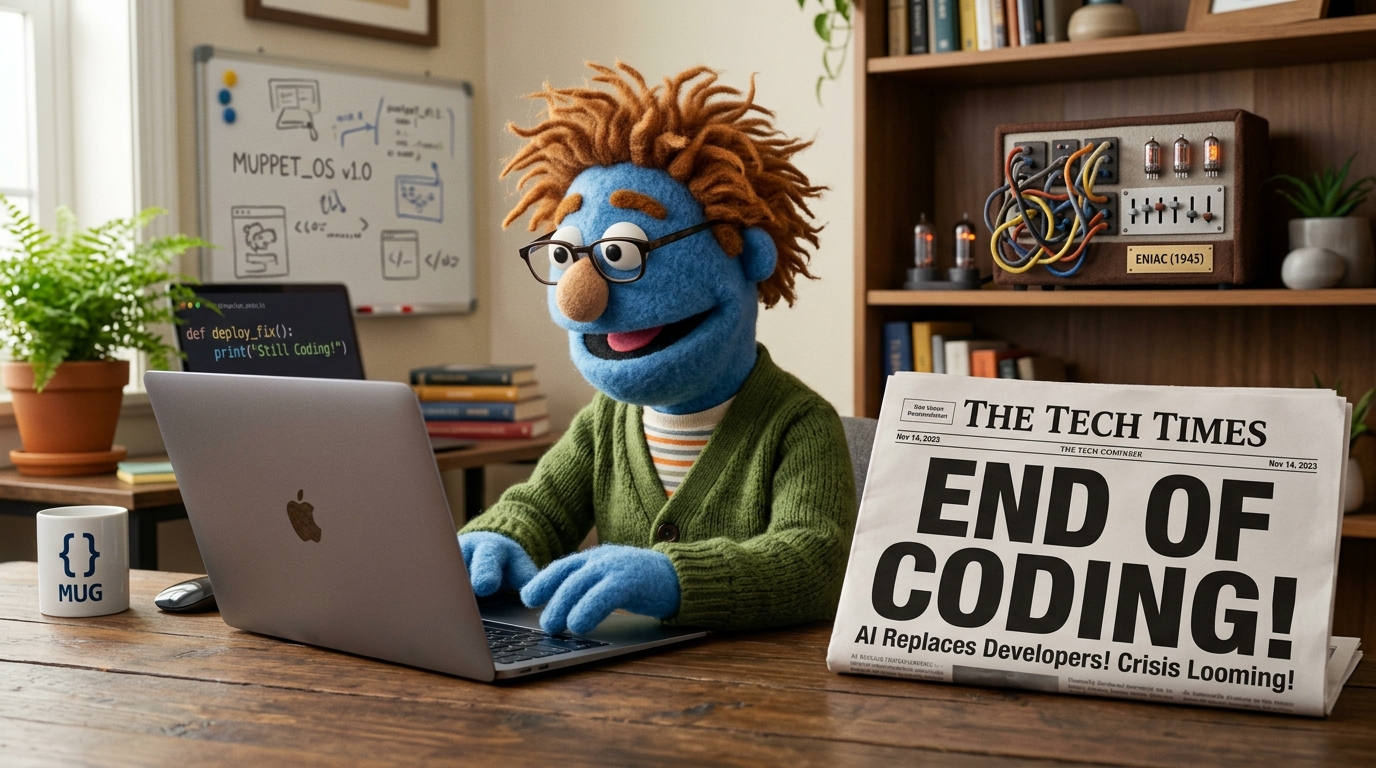

The 1945 programmer would already think we ended

Consider what programming looked like when ENIAC came online. You did not type code. You physically rewired the machine, running cables between adders and multipliers and setting portable function tables full of ten-way switches. Six women, the original ENIAC programmers, would take a problem worked out on paper and spend days getting it loaded into the hardware. That was programming. Cables and switches.

Now imagine one of them time-traveling to 1985 and watching a high schooler in a Chicago suburb type FOR I = 1 TO 10 on a beige Apple IIe. They would not think “ah yes, the craft continues.” They would think they were looking at sorcery. The cables are gone. The switches are gone. Nobody is moving anything physical. The machine understands English-adjacent words.

That is the same gap Schmidt is describing when he watches an agent write code today, except now he is the ENIAC programmer, and the high schooler has a Claude tab open.

A partial list of times coding already “ended”

- The 1940s and early 1950s, when programming meant moving cables and replacing vacuum tubes. The end of coding arrived when the first assembler let you type `MOV` instead of toggling it in by hand.

- The late 1950s and early 1960s, when FORTRAN and COBOL let you write equations and sentences instead of assembly. A whole generation of “real” programmers were convinced the kids using high-level languages did not actually know what they were doing.

- The 1970s and early 1980s, when C and Unix started crystallizing the stack most of us still recognize. If you had spent your career on IBM mainframes with their peculiar word sizes and EBCDIC character encodings, this was a second extinction event.

- The 1980s, when Windows happened. I think most of us can agree that Windows was, to borrow from Douglas Adams, the sort of thing that made a reasonable person suspect humans were still so amazingly primitive they thought digital watches were a pretty neat idea.

- The mid-1990s, when the web ate software. Suddenly the interesting problems were in a browser, and a huge swath of desktop expertise became a backwater overnight.

- The late 2000s, when `git push heroku main` killed the sysadmin-as-gatekeeper. If you were still running your own bare metal because that was the only serious way to do it, congratulations, you had just become a specialist.

- Sometime around 2015, when Stack Overflow plus Docker plus a decent IDE meant a motivated developer could ship things they would have needed a team to ship a decade earlier.

If a programmer from 1945 watched Ferris Bueller’s Day Off, they would think the modem scene was magic. And Ferris is just pulling absence records out of a school district database. The “end of coding” has been happening on a rolling basis for eighty years. It is called evolution.

Schmidt actually said the quiet part

Here is the thing the headlines left out. In the same set of remarks, Schmidt also said that “computer science is not going away. The computer scientist will be here, at least until the computer scientist gets replaced. We’ll be supervising this.” He is not actually predicting the extinction of developers. He is predicting a shift in what the job looks like. Which is, you know, what has happened to this job roughly every ten years since Grace Hopper wrote the first compiler.

Specifications, evaluation criteria, supervision of systems, debugging at a higher level of abstraction. That is still development. It is just not moving cables around on ENIAC.

What is actually happening

I use agentic coding tools every day. They are genuinely good at some things and genuinely bad at others. They can churn through boilerplate, refactor tedious code, and explore a codebase faster than I can. They cannot, in my experience, figure out what you should actually be building. They cannot tell you whether the feature makes sense. They cannot sit in a room with a customer and hear the thing the customer is not saying.

The practitioners I trust most are not panicking about this. They are getting leverage from it. Simon Willison has been documenting this shift in careful detail for a couple of years now, and his take is closer to “this is a power tool, learn to use it” than “pack up your IDE and go home.”

The developers who will struggle are not the ones whose jobs are “ending.” They are the ones who defined themselves by typing speed, by memorized syntax, by being the only person on the team who knew the incantation. Those were never the interesting parts of the job anyway.

Dodge the hype, focus on the value

My mantra on this stuff has not changed. When a big name says something provocative on a podcast, ask what the actual claim is, strip off the headline, and figure out whether it changes what you should do tomorrow.

Schmidt’s underlying point is that the center of gravity of software work is moving. Fine. I agree. The tools are getting better, the expected unit of work is getting bigger, and the person who figures out how to direct these systems well is going to get a lot more done than the person who does not. That is worth paying attention to. That is worth learning.

But “coding is ending” is not a useful thing to plan a career around. It is a useful thing to get clicks on LinkedIn. You and I are not in the clicks business. We are in the shipping business, and developers are not going away in 2026, or 2027, or any time soon. They are being handed a bigger hammer.

Which, historically speaking, has happened about every ten years for the last eighty. Keep swinging.